Bias in AI Decisions – Causes and Countermeasures

Ludwig Brummer from Alexander Thamm GmbH looks at where bias in AI decisions can come from and how to deal with algorithmic bias in practice.

© treety | istockphoto.com

AI is used to automate more and more decisions. In applications such as credit scoring, applicant screening, and fraud detection, which affect many lives, avoiding any kind of discrimination is essential from both an ethical and a legal perspective.

In its AI strategy, the German government explicitly addresses the issue of bias in the use of AI. Recital 71 of the General Data Protection Regulation (GDPR) also states that in automated decisions, discriminatory effects based on racial or ethnic origin, political opinion, religion or beliefs, trade union membership, genetic or health status, or sexual orientation (sensitive information) must be prevented.

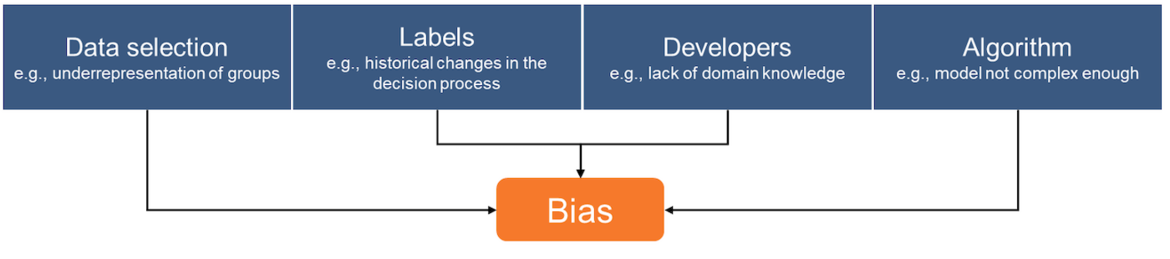

Causes for Bias in AI Decisions – © Alexander Thamm GmbH

Decisions can be biased regardless of who or what makes them. However, algorithmic decisions are easier to trace than human ones, which allows developers to make biases visible and work toward fairness. Bias can be defined as systematic, repeatable errors in a decision system. It can degrade decision quality or lead to unfair or discriminatory results, such as favoring an arbitrary user group. While avoiding bias to improve model quality is well established, the ethical use of AI is still an active research topic.

Bias can have several sources: the data selection, the target variable (label), the developers, and the model itself.

AI systems have no inherent understanding of whether the data they process is objective. If the data contains a bias, the model will learn it. Algorithms are written by humans, who are themselves biased. In addition, the method itself may introduce bias, for example if it is unsuitable for the specific problem.

For example, in the allocation of public housing, bias could have these causes:

Data selection: There used to be fewer single fathers, so they are underrepresented in the data. As a result, their applications may not be handled correctly.

Labels: The allocation process has changed over time, so the historical labels no longer reflect the current allocation process. Model quality decreases.

Developer(s): The developer has no children and therefore does not give enough weight to information on family size.

Algorithm: The relationship between the target and the input variables is too complex for the chosen model (underfitting). This can be addressed by using a more expressive model.
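To see why underfitting produces systematic rather than random errors, here is a minimal sketch assuming made-up data in which the target depends quadratically on a single input. A straight line in `x` leaves positive residuals at the edges of the input range and negative ones near zero; fitting a line to the engineered feature `x**2` (standing in for a "more complex model") removes them. The data and the helpers `fit_simple_ols` and `mean_residual` are hypothetical illustrations.

```python
def fit_simple_ols(xs, ys):
    """Ordinary least squares for y = a + b * x with a single input."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    b = cov / var
    a = mean_y - b * mean_x
    return a, b

def mean_residual(resids, mask):
    """Average residual over the samples selected by a boolean mask."""
    selected = [r for r, m in zip(resids, mask) if m]
    return sum(selected) / len(selected)

# Hypothetical data: the target is the square of the input.
xs = [-3, -2, -1, 0, 1, 2, 3]
ys = [x * x for x in xs]

# Too-simple model: a straight line in x.
a_lin, b_lin = fit_simple_ols(xs, ys)
resid_lin = [y - (a_lin + b_lin * x) for x, y in zip(xs, ys)]

# More expressive model: a straight line in the engineered feature x**2.
zs = [x * x for x in xs]
a_quad, b_quad = fit_simple_ols(zs, ys)
resid_quad = [y - (a_quad + b_quad * z) for z, y in zip(zs, ys)]

edges = [abs(x) >= 2 for x in xs]   # inputs at the edges of the range
centre = [abs(x) <= 1 for x in xs]  # inputs near zero

# Linear model: errors depend on where the input lies (systematic bias).
print(mean_residual(resid_lin, edges), mean_residual(resid_lin, centre))
# Quadratic feature: residuals vanish on this noise-free data.
print(max(abs(r) for r in resid_quad))
```

The same pattern applies to groups of people: if one group sits in a region of the input space that the model cannot represent, its members receive systematically worse decisions.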

Handling Bias in Practice

Optimizing a model for fairness often conflicts with optimizing it for predictive quality. Every project therefore needs awareness of bias and an explicit definition of fairness, because a model can be fair by one definition and unfair by another. Only then can the decision process be analyzed for bias and fairness.
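A toy illustration of two fairness definitions disagreeing, assuming hypothetical groups `A` and `B` and made-up labels. Demographic parity compares how often each group receives a positive decision; equal opportunity compares the true positive rate, i.e. how often truly qualified members of each group are approved. The same predictions satisfy the first definition and violate the second.

```python
def rate(values):
    """Mean of a list of 0/1 values."""
    return sum(values) / len(values)

def selection_rate(y_pred, groups, g):
    """Share of members of group g that receive a positive decision."""
    return rate([p for p, grp in zip(y_pred, groups) if grp == g])

def true_positive_rate(y_true, y_pred, groups, g):
    """Share of truly qualified members of group g that receive a positive decision."""
    return rate([p for t, p, grp in zip(y_true, y_pred, groups) if grp == g and t == 1])

# Hypothetical toy data: 1 = qualified / approved, 0 = not.
groups = ["A"] * 4 + ["B"] * 4
y_true = [1, 1, 0, 0, 1, 0, 0, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]

for g in ("A", "B"):
    print(g, selection_rate(y_pred, groups, g), true_positive_rate(y_true, y_pred, groups, g))
# A 0.5 0.5
# B 0.5 1.0
```

Both groups are approved at the same rate, so demographic parity holds, but a qualified member of group `A` is only half as likely to be approved as a qualified member of group `B`.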

Bias in the data can be detected, and then corrected, by analyzing the underlying data. This includes outlier analysis, checking whether dependencies in the data change over time, or simply plotting variables split into suitable groups, e.g., the distribution of the target variable for each gender.
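Before plotting, the same group comparison can be computed as a small table. The sketch below assumes hypothetical historical records with illustrative fields `gender`, `year`, and a binary `label`. Pooled over all years, both genders have the same positive rate; split by year, the dependency turns out to have reversed, which is exactly the kind of change over time that a pooled view hides.

```python
from collections import defaultdict

def positive_rate_by(records, key):
    """Share of records with label 1, split by the value key(record)."""
    counts = defaultdict(lambda: [0, 0])  # key value -> [positives, total]
    for rec in records:
        bucket = counts[key(rec)]
        bucket[0] += rec["label"]
        bucket[1] += 1
    return {k: pos / total for k, (pos, total) in counts.items()}

# Hypothetical historical records; field names are illustrative only.
records = [
    {"gender": "f", "year": 2015, "label": 1},
    {"gender": "f", "year": 2015, "label": 0},
    {"gender": "m", "year": 2015, "label": 1},
    {"gender": "m", "year": 2015, "label": 1},
    {"gender": "f", "year": 2020, "label": 1},
    {"gender": "f", "year": 2020, "label": 1},
    {"gender": "m", "year": 2020, "label": 0},
    {"gender": "m", "year": 2020, "label": 1},
]

by_gender = positive_rate_by(records, lambda r: r["gender"])
by_year_and_gender = positive_rate_by(records, lambda r: (r["year"], r["gender"]))
print(by_gender)           # {'f': 0.75, 'm': 0.75}
print(by_year_and_gender)  # {(2015, 'f'): 0.5, (2015, 'm'): 1.0, (2020, 'f'): 1.0, (2020, 'm'): 0.5}
```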

To build a fair model, it is not enough to omit sensitive information from the input variables, because other input variables can be statistically dependent on it and act as proxies.
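One simple way to look for such proxies is to correlate each candidate input with the sensitive attribute, which is kept aside for this check even though it is not a model input. The sketch below uses the Pearson correlation against a 0/1 attribute (the point-biserial correlation). The features, values, and the 0.5 threshold are assumptions for illustration, and a linear correlation will miss nonlinear dependencies.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Hypothetical data: 1 = member of a minority group. This column is NOT
# a model input; it is only used here to check the inputs for proxies.
minority = [1, 1, 1, 0, 0, 0]
features = {
    "commute_km": [40, 35, 45, 10, 15, 12],
    "years_experience": [5, 3, 8, 4, 6, 5],
}

for name, values in features.items():
    r = pearson(values, minority)
    flag = "possible proxy" if abs(r) > 0.5 else "ok"  # threshold is an assumption
    print(f"{name}: r = {r:+.2f} ({flag})")
```

Here `commute_km` is strongly correlated with group membership, so dropping the sensitive column alone would not stop a model from discriminating through it.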

A reference dataset allows further analysis of fairness. The ideal reference dataset contains all model-relevant information and all sensitive information, with groups occurring at the frequency expected in production. Applying the model to this dataset can reveal hidden biases, e.g., discrimination against minorities, even though ethnic background is not one of the model inputs.
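A minimal sketch of both steps, assuming a hypothetical reference set with a `group` column and a stand-in model that only reads `commute_km`: first check that group frequencies match the expected production mix, then compare the model's approval rate per group. The expected shares and the decision rule are made-up assumptions, not a real model.

```python
from collections import Counter

def group_share_gap(groups, expected_shares):
    """Share of each group in the reference set minus its expected production share."""
    counts = Counter(groups)
    n = len(groups)
    return {g: counts[g] / n - share for g, share in expected_shares.items()}

def approval_rate_by_group(records, decide):
    """Share of positive decisions per group; 'group' is used only for evaluation."""
    totals, positives = Counter(), Counter()
    for r in records:
        totals[r["group"]] += 1
        positives[r["group"]] += decide(r)
    return {g: positives[g] / totals[g] for g in totals}

def hypothetical_model(applicant):
    # Stand-in for a trained model: it never reads the "group" field.
    return 1 if applicant["commute_km"] < 30 else 0

# Hypothetical reference set with the sensitive attribute attached.
reference = [
    {"group": "majority", "commute_km": 10},
    {"group": "majority", "commute_km": 20},
    {"group": "majority", "commute_km": 35},
    {"group": "majority", "commute_km": 15},
    {"group": "majority", "commute_km": 12},
    {"group": "majority", "commute_km": 22},
    {"group": "minority", "commute_km": 40},
    {"group": "minority", "commute_km": 25},
]
expected_shares = {"majority": 0.75, "minority": 0.25}  # assumed production mix

print(group_share_gap([r["group"] for r in reference], expected_shares))
print(approval_rate_by_group(reference, hypothetical_model))
```

The group frequencies match the assumed production mix, yet the minority group is approved noticeably less often, although the model never sees group membership.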

There are specialized libraries (e.g., AIF360, fairlearn) that compute fairness measures and thereby detect biases in models. They require the evaluated dataset to include the sensitive information. They also provide methods for mitigating bias.
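To show what such a fairness measure computes, here is a hand-rolled sketch of two group metrics based on selection rates. fairlearn offers metrics under similar names in `fairlearn.metrics` (e.g. `demographic_parity_difference`, which takes a `sensitive_features` argument), but the functions below are a plain-Python illustration with a simplified signature, not the library's API. The predictions and group labels are hypothetical.

```python
def selection_rates(y_pred, sensitive):
    """Share of positive predictions for each value of the sensitive attribute."""
    rates = {}
    for g in sorted(set(sensitive)):
        preds = [p for p, s in zip(y_pred, sensitive) if s == g]
        rates[g] = sum(preds) / len(preds)
    return rates

def demographic_parity_difference(y_pred, sensitive):
    """Largest minus smallest group selection rate (0 means parity)."""
    rates = list(selection_rates(y_pred, sensitive).values())
    return max(rates) - min(rates)

def demographic_parity_ratio(y_pred, sensitive):
    """Smallest divided by largest group selection rate (1 means parity).
    Assumes at least one group has a nonzero selection rate."""
    rates = list(selection_rates(y_pred, sensitive).values())
    return min(rates) / max(rates)

# Hypothetical predictions on a dataset that includes the sensitive attribute.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
sensitive = ["x", "x", "x", "x", "y", "y", "y", "y"]

print(selection_rates(y_pred, sensitive))                # {'x': 0.75, 'y': 0.25}
print(demographic_parity_difference(y_pred, sensitive))  # 0.5
print(demographic_parity_ratio(y_pred, sensitive))
```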

Error analysis of the model outputs helps to find data samples the model struggles with. This often reveals underrepresented groups and can be done by examining samples where the model predicted the wrong class with high confidence.
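A minimal sketch of this error analysis, assuming hypothetical model outputs that include the predicted class, the predicted-class probability (`confidence`), and a descriptive `segment` kept for analysis. The 0.9 threshold is an arbitrary assumption. Counting the segments among confident errors points to groups the model may not have seen enough of.

```python
from collections import Counter

def confident_errors(samples, threshold=0.9):
    """Misclassified samples whose predicted-class probability is at least
    `threshold`, sorted from most to least confident."""
    errors = [s for s in samples if s["pred"] != s["label"] and s["confidence"] >= threshold]
    return sorted(errors, key=lambda s: s["confidence"], reverse=True)

# Hypothetical model outputs on a validation set.
samples = [
    {"id": 1, "label": 1, "pred": 1, "confidence": 0.95, "segment": "two-parent family"},
    {"id": 2, "label": 1, "pred": 0, "confidence": 0.97, "segment": "single father"},
    {"id": 3, "label": 0, "pred": 1, "confidence": 0.60, "segment": "two-parent family"},
    {"id": 4, "label": 1, "pred": 0, "confidence": 0.93, "segment": "single father"},
    {"id": 5, "label": 0, "pred": 0, "confidence": 0.88, "segment": "single mother"},
]

flagged = confident_errors(samples)
print([s["id"] for s in flagged])               # [2, 4]
print(Counter(s["segment"] for s in flagged))   # Counter({'single father': 2})
```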

Throughout the model life cycle, it is important to monitor the model and to let users retrace the reasons for its decisions. For more complex models, this is possible, at least approximately, with explainable AI methods such as SHAP values, which help to uncover indirect biases (a real example: discrimination against candidates from minority communities in hiring decisions via the length of their commute).
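For a linear model with independent features, SHAP values have a closed form: the contribution of feature i is w_i · (x_i − E[x_i]), where the expectation is taken over background data. The sketch below uses this special case with a hypothetical scoring model and made-up data to show how a large negative contribution from `commute_km` would surface in an explanation. For nonlinear models, dedicated tools such as the `shap` package are needed, and their results are approximations.

```python
def linear_shap(weights, x, background):
    """SHAP values for a linear model, assuming independent features:
    phi_i = w_i * (x_i - mean of feature i over the background data)."""
    n = len(background)
    means = {f: sum(row[f] for row in background) / n for f in weights}
    return {f: weights[f] * (x[f] - means[f]) for f in weights}

def predict(weights, bias, x):
    """Score of the linear model for one input."""
    return bias + sum(weights[f] * x[f] for f in weights)

# Hypothetical scoring model and data.
weights = {"commute_km": -0.02, "years_experience": 0.1}
bias = 0.5
background = [
    {"commute_km": 10, "years_experience": 4},
    {"commute_km": 20, "years_experience": 6},
    {"commute_km": 30, "years_experience": 5},
]
candidate = {"commute_km": 45, "years_experience": 5}

phi = linear_shap(weights, candidate, background)
print(phi)  # commute_km contributes about -0.5, years_experience 0.0

# Additivity: contributions sum to the prediction minus the average prediction.
avg_pred = sum(predict(weights, bias, row) for row in background) / len(background)
print(predict(weights, bias, candidate) - avg_pred, sum(phi.values()))
```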

If the training data does not sufficiently resemble the data seen in production, additional data can be collected and incorporated into the model. If that is not possible, upsampling, data augmentation, or downsampling can be used to achieve a more representative training set.
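A minimal sketch of random upsampling, assuming hypothetical training records with a `household` field in which single fathers are underrepresented: records from smaller groups are drawn with replacement until every group is as large as the largest one. A fixed seed keeps the result reproducible.

```python
import random
from collections import Counter

def upsample_to_largest(records, key, seed=0):
    """Randomly duplicate records (drawing with replacement) so that every
    group defined by `key` matches the size of the largest group."""
    rng = random.Random(seed)
    by_group = {}
    for r in records:
        by_group.setdefault(r[key], []).append(r)
    largest = max(len(members) for members in by_group.values())
    balanced = []
    for members in by_group.values():
        balanced.extend(members)
        balanced.extend(rng.choices(members, k=largest - len(members)))
    return balanced

# Hypothetical training data in which single fathers are underrepresented.
train = [{"household": "two-parent", "id": i} for i in range(6)] + [
    {"household": "single father", "id": i} for i in range(6, 8)
]

balanced = upsample_to_largest(train, "household")
print(Counter(r["household"] for r in balanced))  # Counter({'two-parent': 6, 'single father': 6})
```

Upsampling should only be applied to the training split; evaluating on duplicated records would overstate model quality. The duplicates are the same objects as the originals, so copy them if they are modified later.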

Not every bias is bad: a known bias can be counteracted by deliberately introducing a counter-bias. For example, in automated applicant screening, an underrepresented minority could receive bonus points in the scoring if a minimum representation quota is to be met in the future.
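A toy sketch of such a counter-bias, assuming hypothetical candidates with a model score and a group label: a fixed bonus is added to the scores of the underrepresented group before the top `k` are shortlisted, and the resulting representation is then checked. The bonus of 0.15 and the shortlist size are arbitrary assumptions; in practice, they would be derived from the quota and reviewed.

```python
def shortlist(candidates, k, bonus_group=None, bonus=0.0):
    """Rank candidates by score, plus an optional bonus for one group, and return the top k."""
    def adjusted(c):
        return c["score"] + (bonus if c["group"] == bonus_group else 0.0)
    return sorted(candidates, key=adjusted, reverse=True)[:k]

def share(selected, group):
    """Fraction of selected candidates belonging to `group`."""
    return sum(c["group"] == group for c in selected) / len(selected)

# Hypothetical candidate scores.
candidates = [
    {"name": "c1", "group": "majority", "score": 0.90},
    {"name": "c2", "group": "majority", "score": 0.85},
    {"name": "c3", "group": "majority", "score": 0.80},
    {"name": "c4", "group": "minority", "score": 0.78},
    {"name": "c5", "group": "majority", "score": 0.75},
    {"name": "c6", "group": "minority", "score": 0.72},
]

plain = shortlist(candidates, k=4)
adjusted = shortlist(candidates, k=4, bonus_group="minority", bonus=0.15)
print(share(plain, "minority"), share(adjusted, "minority"))  # 0.25 0.5
```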

Conclusion

When automated decisions affect people, analyzing them for bias, and for discrimination in particular, is required both ethically and legally. Beyond that, the analysis often yields additional insights in practice that improve performance, transparency, and monitoring, and thus the decision process as a whole, even though performance and fairness are conflicting objectives in theory. Finally, if things get serious, it also helps in court.

Ludwig Brummer has been a Senior Data Scientist and team lead at Alexander Thamm GmbH since 2015. He has implemented a variety of use cases in energy, insurance, mechanical engineering, and banking, such as credit scoring, automated claim settlement, and maintenance automation. For him, decision automation is an opportunity to make decisions not only faster and cheaper but also fairer for all users.

Please note: The opinions expressed in Industry Insights published by dotmagazine are the author’s own and do not reflect the view of the publisher, eco – Association of the Internet Industry.